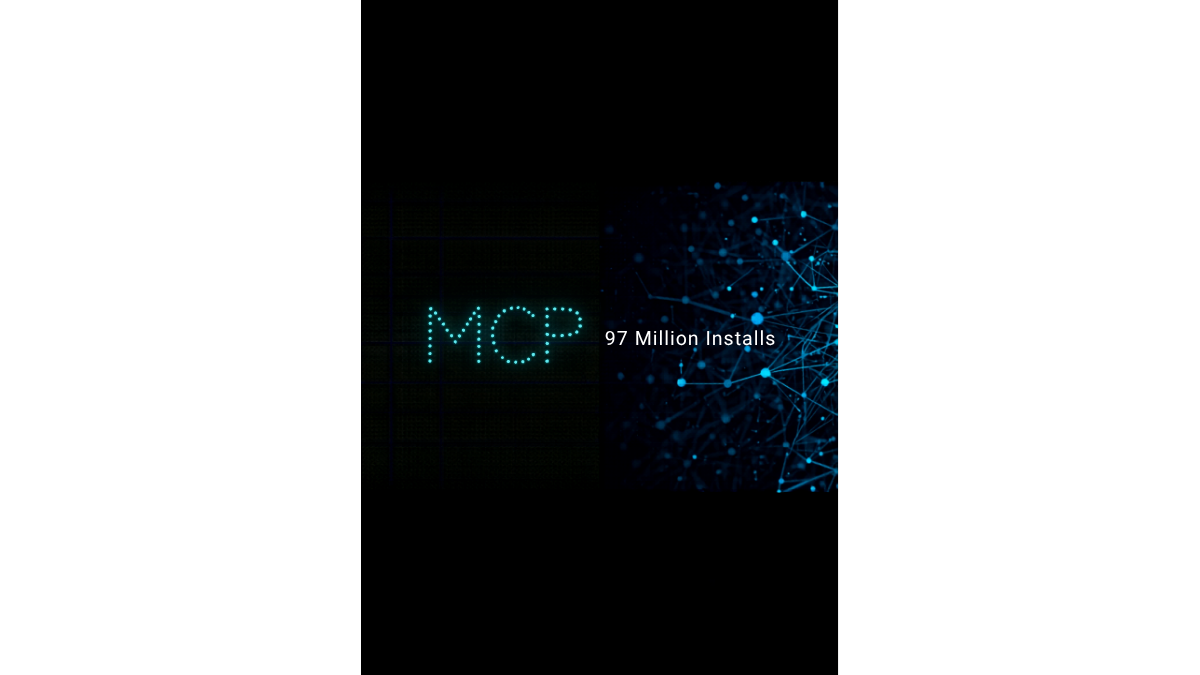

MCP Hit 97 Million Installs. The Protocol War Is Over.

Your AI Tools Are About to Get a Lot More Useful

Every AI assistant you use is about to stop being a fancy autocomplete and start actually doing things. The reason is a protocol most people have never heard of.

Anthropic’s Model Context Protocol crossed 97 million monthly SDK downloads in March 2026. For context, Kubernetes took nearly four years to reach comparable deployment density. MCP did it in sixteen months.

The Linux Foundation announced the Agentic AI Foundation (AAIF) to house MCP under open governance, alongside OpenAI’s AGENTS.md and Block’s goose. This is not one company’s project anymore. OpenAI, Google, Microsoft, AWS, and Cloudflare all ship MCP-compatible tooling. Over 5,800 community and enterprise MCP servers are live, covering everything from databases to CRMs to dev tools.

What MCP Actually Is

Most people encounter AI through a chat interface and assume the model is the product. It is not. The model is the reasoning engine. The product is everything the model can connect to.

MCP is the protocol that defines how AI models connect to external tools and data sources. It gives a model a standardized way to say “I need to query your database” or “I need to write to your calendar” or “I need to run a command in your shell” and have the target system respond in a format the model can use.

Before MCP, every AI integration was bespoke. Connecting Claude to your Notion database required Anthropic to build a Notion integration or you to write one from scratch, with custom JSON formats, one-off authentication handling, and code that broke every time either API changed. Multiply that by every tool you want to connect, and you have an integration surface that nobody can maintain.

MCP replaces that with a single protocol. Build one MCP server for Notion, and every MCP-compatible AI application can connect to it. Build MCP client support into a host like Claude Desktop once, and it can connect to every MCP-compatible server. The USB-port analogy fits: one interface, and everything plugs in.
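The arithmetic behind "an integration surface nobody can maintain" is simple. With bespoke integrations, every host application needs its own connector for every tool; with a shared protocol, each host implements a client once and each tool implements a server once. A minimal sketch, with illustrative counts that are assumptions, not survey data:

```javascript
// Bespoke world: every (host, tool) pair needs its own integration.
function bespokeIntegrations(hosts, tools) {
  return hosts * tools;
}

// Shared-protocol world: each host ships one MCP client,
// and each tool ships one MCP server.
function protocolIntegrations(hosts, tools) {
  return hosts + tools;
}

// Illustrative numbers only: 5 AI hosts, 40 tools.
const hosts = 5;
const tools = 40;
console.log(`bespoke:  ${bespokeIntegrations(hosts, tools)}`);  // bespoke:  200
console.log(`protocol: ${protocolIntegrations(hosts, tools)}`); // protocol: 45
```

Each new tool costs one integration per host in the bespoke model, but only one server in the protocol model, which is why the gap widens as the ecosystem grows.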

How It Works Under the Hood

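MCP uses a client-server architecture. The host application (Claude Desktop, an IDE, or an agent runtime) runs an MCP client for each server it connects to, and each tool or data source runs an MCP server. Messages are JSON-RPC 2.0, carried over stdio for local servers or Streamable HTTP for remote ones. After an initialization handshake in which both sides declare their capabilities, the client asks the server what it offers and invokes tools on the model’s behalf.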
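On the wire these are ordinary JSON-RPC 2.0 messages: requests carry an `id` so responses can be matched to them, notifications do not. The sketch below builds a session's opening messages by hand, without the SDK, to show their shape and order. The client name, the protocol version string, and the file path are illustrative assumptions; check the MCP specification for the revision your SDK targets.

```javascript
// Minimal JSON-RPC 2.0 message builders for an MCP-style session.
// Requests get an incrementing id; notifications have none.
function createSession() {
  let nextId = 1;
  const pending = new Map(); // id -> method, so responses can be matched later

  function request(method, params) {
    const id = nextId++;
    pending.set(id, method);
    return { jsonrpc: "2.0", id, method, params };
  }

  function notification(method, params) {
    return { jsonrpc: "2.0", method, params };
  }

  // Match an incoming response to the request that produced it.
  function resolve(response) {
    const method = pending.get(response.id);
    pending.delete(response.id);
    return method;
  }

  return { request, notification, resolve, pending };
}

const session = createSession();

// Order a client follows: handshake, then discovery, then tool calls.
const messages = [
  session.request("initialize", {
    protocolVersion: "2025-06-18", // example spec revision string
    capabilities: {},
    clientInfo: { name: "example-client", version: "0.1.0" }, // hypothetical client
  }),
  session.notification("notifications/initialized"),
  session.request("tools/list"),
  session.request("tools/call", {
    name: "read_file",
    arguments: { path: "notes.txt" }, // hypothetical file
  }),
];

// JSON.stringify drops undefined params, matching what goes over stdio.
for (const m of messages) console.log(JSON.stringify(m));
```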

A minimal MCP server looks like this:

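```javascript
import { readFile } from "node:fs/promises";
import { Server } from "@modelcontextprotocol/sdk/server/index.js";
import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";
import {
  CallToolRequestSchema,
  ListToolsRequestSchema,
} from "@modelcontextprotocol/sdk/types.js";

// Declare the tools capability; the SDK rejects tool handlers without it.
const server = new Server(
  { name: "file-reader", version: "1.0.0" },
  { capabilities: { tools: {} } },
);

// tools/list: advertise what this server can do.
server.setRequestHandler(ListToolsRequestSchema, async () => ({
  tools: [{
    name: "read_file",
    description: "Read the contents of a file from the filesystem",
    inputSchema: {
      type: "object",
      properties: { path: { type: "string" } },
      required: ["path"],
    },
  }],
}));

// tools/call: run the requested tool with the arguments the model supplied.
server.setRequestHandler(CallToolRequestSchema, async (request) => {
  if (request.params.name !== "read_file") {
    throw new Error(`Unknown tool: ${request.params.name}`);
  }
  const { path } = request.params.arguments;
  const content = await readFile(path, "utf-8");
  return { content: [{ type: "text", text: content }] };
});

// Exchange JSON-RPC messages over stdin/stdout.
const transport = new StdioServerTransport();
await server.connect(transport);
```

The client sends a tools/list request and gets back a description of what the server can do, which the host passes to the model. When the model decides to use a tool, the client sends tools/call with the model’s arguments. The server executes the action and returns structured data, and the model uses that data to continue its reasoning.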

That request-and-response loop is the heart of the protocol. The complexity is in what you build on top of it, not in MCP itself.
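To make that loop concrete without the SDK or a real model, here is a toy in-process version of the file-reader server: it dispatches on the JSON-RPC method name, and a hard-coded function stands in for the model's decision. The in-memory file map and the `pickTool` stub are assumptions for illustration; a real host hands the tool list to the LLM and lets it choose.

```javascript
// Toy, in-process stand-in for the read_file server.
// No SDK, no transport, no real filesystem: "files" is hypothetical data.
const files = { "todo.txt": "ship the MCP post" };

const tools = [{
  name: "read_file",
  description: "Read the contents of a file from the filesystem",
  inputSchema: {
    type: "object",
    properties: { path: { type: "string" } },
    required: ["path"],
  },
}];

// Server side: dispatch each JSON-RPC request on its method name.
function handle({ id, method, params }) {
  const reply = (result) => ({ jsonrpc: "2.0", id, result });
  const fail = (code, message) => ({ jsonrpc: "2.0", id, error: { code, message } });

  if (method === "tools/list") return reply({ tools });

  if (method === "tools/call") {
    // -32602 is JSON-RPC 2.0's "Invalid params" code.
    if (params.name !== "read_file") return fail(-32602, `Unknown tool: ${params.name}`);
    const text = files[params.arguments.path];
    // A tool that runs but fails reports it inside the result via isError.
    if (text === undefined) {
      return reply({ content: [{ type: "text", text: "File not found" }], isError: true });
    }
    return reply({ content: [{ type: "text", text }] });
  }

  // -32601 is JSON-RPC 2.0's "Method not found" code.
  return fail(-32601, `Unknown method: ${method}`);
}

// Stand-in for the model: the choice of tool and arguments is hard-coded.
function pickTool(availableTools) {
  return { name: availableTools[0].name, arguments: { path: "todo.txt" } };
}

// Client side: discover, let the "model" choose, then call.
const listed = handle({ jsonrpc: "2.0", id: 1, method: "tools/list" });
const choice = pickTool(listed.result.tools);
const called = handle({ jsonrpc: "2.0", id: 2, method: "tools/call", params: choice });
console.log(called.result.content[0].text); // ship the MCP post
```

The split between protocol errors (a JSON-RPC `error` object) and tool failures (a normal result flagged `isError`) matters in practice: the second kind goes back to the model, which can read the message and try something else.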

The Three Server Primitives

MCP servers can expose three kinds of primitives to a model:

Tools are actions the model can invoke: run a query, write a file, send an email, make an API call. Tools can have side effects, and many of them change state in the world.

Resources are data the model can read: documents, database records, configuration files, log output. Resources are generally read-only. The model uses them to build context without taking action.

Prompts are templated instructions the server offers for insertion into the model’s context, typically when the user selects them: system-style instructions, workflow templates, contextual guidance. Prompts let server operators influence how the model reasons about their domain.

A full-featured MCP server for a task management system might expose tools to create, update, and close tasks; resources to read task lists, project details, and user assignments; and prompts that instruct the model to always check for blocking dependencies before marking a task complete. The model gets tools, data, and operational guidance from a single server connection.
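Here is a rough sketch of that task-management server's surface, with the transport and SDK stripped away so only the three primitives remain. The JSON-RPC method names (tools/list, tools/call, resources/list, resources/read, prompts/list, prompts/get) come from the MCP specification; the task data, the `tasks://all` URI, the `close_task` tool, and the `close_safely` prompt are hypothetical.

```javascript
// Hypothetical in-memory task tracker exposing all three MCP primitives.
// Method names follow the MCP spec; data, URI, tool and prompt names are invented.
const tasks = {
  1: { title: "Write draft", done: true, blockedBy: [] },
  2: { title: "Publish post", done: false, blockedBy: [3] },
  3: { title: "Review draft", done: false, blockedBy: [] },
};

const handlers = {
  // Tools: actions that change state.
  "tools/list": () => ({
    tools: [{
      name: "close_task",
      description: "Mark a task as done",
      inputSchema: {
        type: "object",
        properties: { id: { type: "number" } },
        required: ["id"],
      },
    }],
  }),
  "tools/call": ({ name, arguments: args }) => {
    if (name !== "close_task" || !(args.id in tasks)) {
      throw new Error(`Bad tool call: ${name}`);
    }
    tasks[args.id].done = true;
    return { content: [{ type: "text", text: `Closed task ${args.id}` }] };
  },

  // Resources: read-only context, addressed by URI.
  "resources/list": () => ({
    resources: [{ uri: "tasks://all", name: "All tasks", mimeType: "application/json" }],
  }),
  "resources/read": ({ uri }) => {
    if (uri !== "tasks://all") throw new Error(`Unknown resource: ${uri}`);
    return { contents: [{ uri, mimeType: "application/json", text: JSON.stringify(tasks) }] };
  },

  // Prompts: reusable instructions the user or client pulls into context.
  "prompts/list": () => ({
    prompts: [{ name: "close_safely", description: "Check blockers before closing a task" }],
  }),
  "prompts/get": ({ name }) => {
    if (name !== "close_safely") throw new Error(`Unknown prompt: ${name}`);
    return {
      messages: [{
        role: "user",
        content: {
          type: "text",
          text: "Before calling close_task, read tasks://all and confirm " +
                "that every task listed in blockedBy is done.",
        },
      }],
    };
  },
};

// Route a request to its handler by method name.
function handle(method, params = {}) {
  const fn = handlers[method];
  if (!fn) throw new Error(`Unknown method: ${method}`);
  return fn(params);
}

// A client pulling context, then acting on it.
const snapshot = JSON.parse(handle("resources/read", { uri: "tasks://all" }).contents[0].text);
console.log(snapshot[2].blockedBy); // [ 3 ]
console.log(handle("tools/call", { name: "close_task", arguments: { id: 3 } }).content[0].text); // Closed task 3
```

One design point this exposes: the prompt only asks the model to check blockers, it does not enforce anything. If closing a blocked task must never happen, the `close_task` handler itself should refuse, with the prompt acting as guidance rather than as the guardrail.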

The Competitive Landscape

Rivals exist. IBM has the Agent Communication Protocol (ACP). Google has Agent2Agent (A2A). Both emerged in 2025 as alternatives to MCP.

ACP targets enterprise workflows and is designed to handle more complex multi-agent coordination patterns. A2A focuses on agent-to-agent communication rather than agent-to-tool communication. Neither is wrong. But both are fighting adoption curves that MCP has already won.

97 million monthly downloads is not a lead. It is a moat. Developer tooling network effects compound fast. When 5,800 MCP servers already exist covering your database, your CRM, your code repository, your calendar, and your filesystem, the switching cost of adopting a different protocol is the cost of rebuilding every integration you already have.

The Linux Foundation governance announcement matters because it removes the remaining objection for enterprise adoption. MCP is no longer an Anthropic project. It is an open standard with foundation backing, the same path that HTTP, Docker, and Kubernetes walked before it.

Why This Matters Right Now

If you are building AI-powered workflows, MCP is the integration layer you need to understand. Not “eventually” understand. Now.

The tooling is mature. The SDK is stable. The server ecosystem covers the tools most developers and teams actually use. Claude Desktop, Cursor, Zed, and VS Code all support MCP. The barrier to entry for running your first MCP server is about thirty minutes and a package install.

More importantly: MCP is the difference between AI that reasons about your work and AI that actually does your work. The gap between those two things is everything.

The BytesNation Angle

We have a full MCP series coming. Obsidian MCP for wiring your personal knowledge vault into Claude. Granola MCP for surfacing meeting transcripts during active sessions. Claude Code MCP for exposing homelab infrastructure to AI-driven automation. The tools we use every day in this lab all run on this protocol. You will see exactly how they work, hands on, with real configs and real results.

MCP is the thing that makes AI stop talking and start working. Pay attention.