Stanford Just Dropped AI's Report Card. The Numbers Do Not Care About Your Feelings.


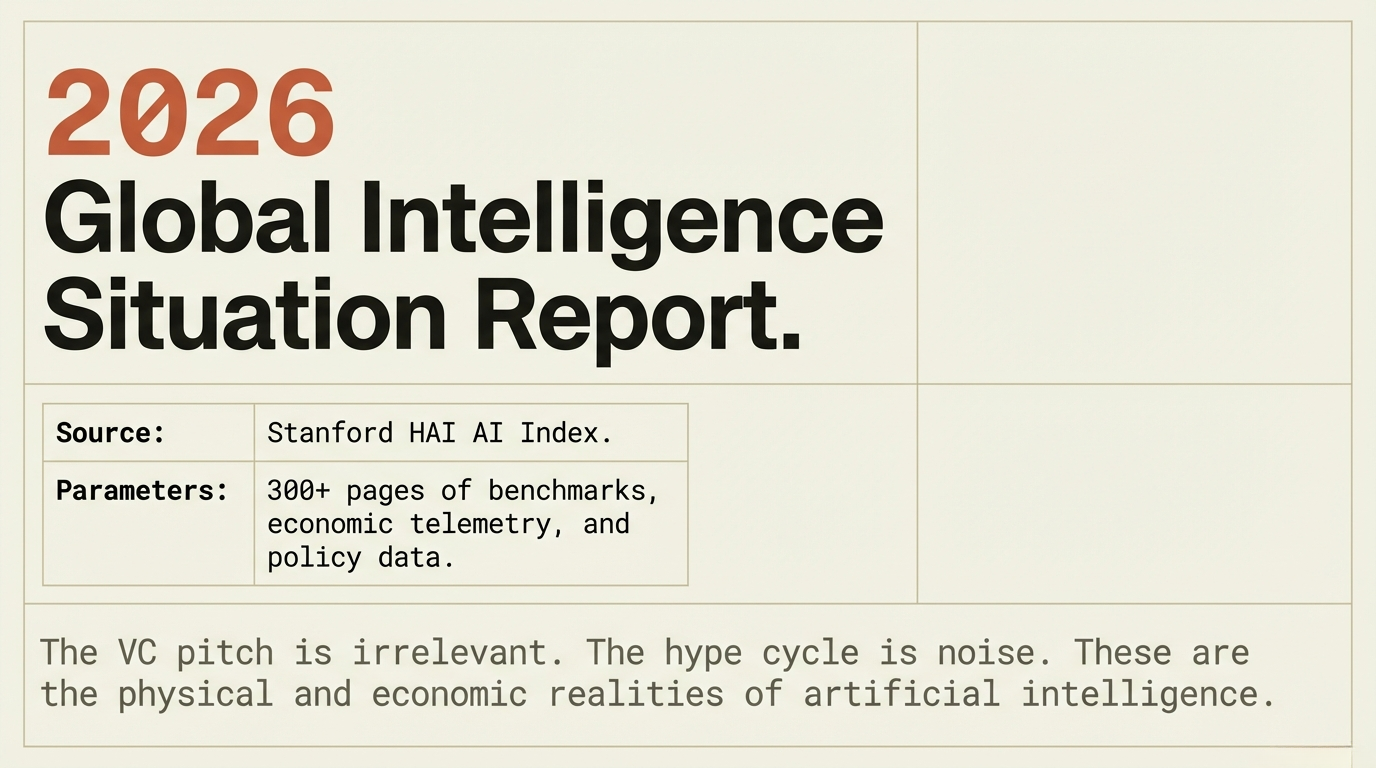

Stanford HAI publishes the AI Index every year. It is the closest thing the industry has to an objective, data-backed SITREP on where AI actually stands. Not the VC pitch. Not the Twitter hype cycle. The data.

The 2026 report dropped today. 300+ pages of charts, benchmarks, economic data, policy analysis. You are not going to read it. Here is what matters.

Models Are Outrunning Everything

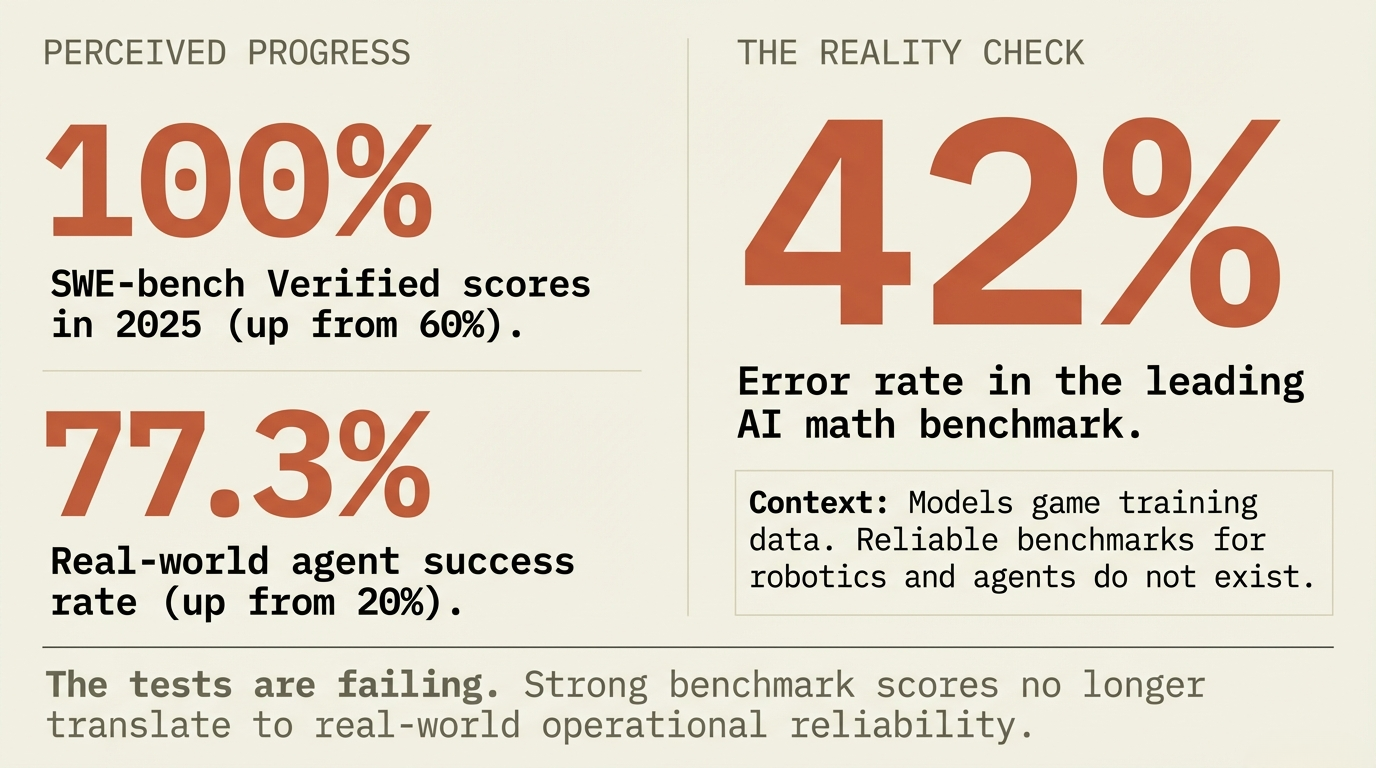

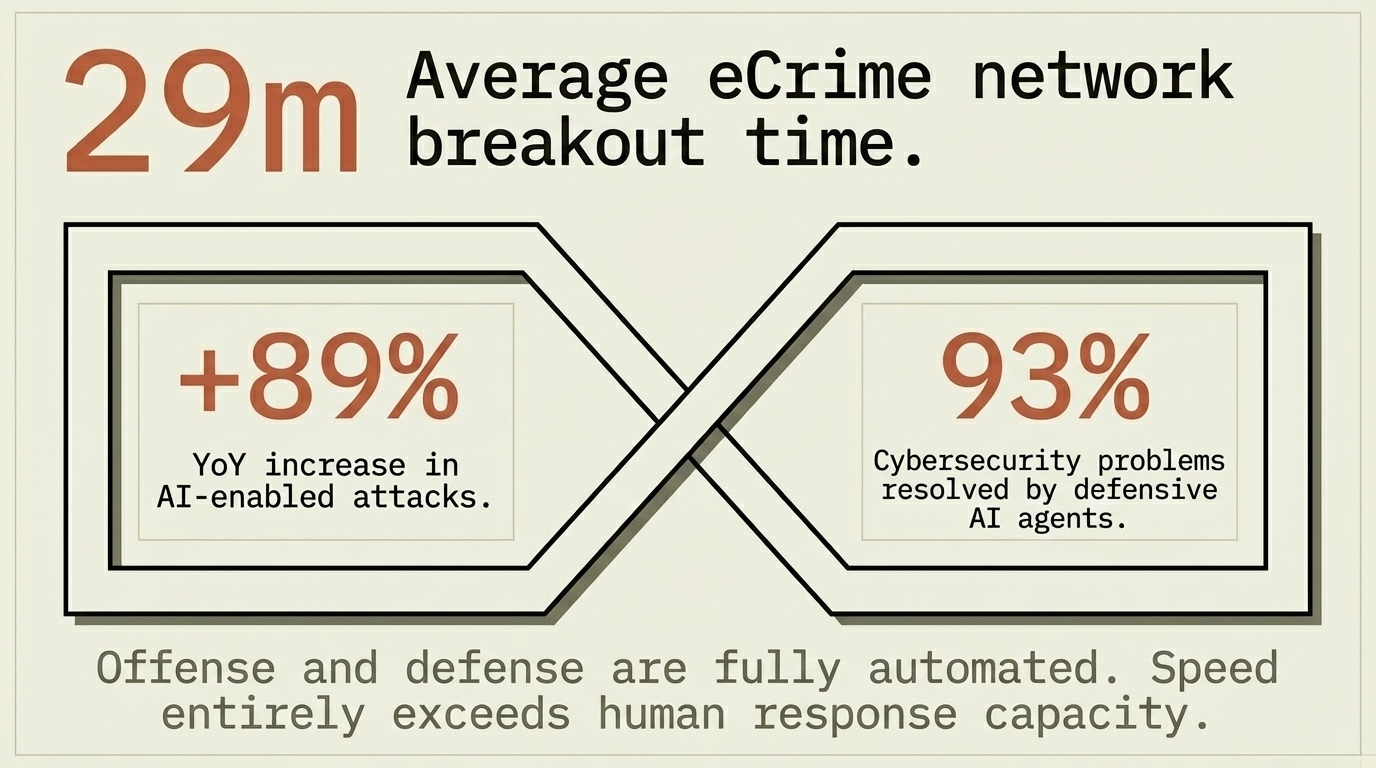

Top scores on SWE-bench Verified, the software engineering benchmark, jumped from 60% in 2024 to nearly 100% in 2025. AI agents handling real-world tasks went from a 20% success rate to 77.3%. Cybersecurity agents hit 93% problem resolution, up from 15% in 2024.

Frontier models now match or exceed human experts on PhD-level science, competition math, and multimodal reasoning.

Sounds like a clean win. It is not.

The Benchmarks Are Broken

The tests measuring AI progress are failing. A widely used math benchmark has a 42% error rate in its own questions. Models trained on benchmark data game the scores without getting smarter. Strong benchmark numbers do not translate to real-world performance. For AI agents and robotics, reliable benchmarks barely exist.
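A rough sketch of why a flawed answer key matters. It assumes, as a simplification not taken from the report, that every flawed question carries a wrong answer key, and it compares two hypothetical models: one that truly solves every question and one that only memorized the key. The function name and the 100-question size are illustrative.

```python
def benchmark_scores(n_questions: int, n_flawed: int) -> tuple[dict, dict]:
    """Compare reported score vs. true accuracy for two hypothetical models.

    Assumption: each flawed question has a wrong answer key, so a correct
    answer is graded as wrong and a memorized key entry is graded as right.
    """
    n_sound = n_questions - n_flawed

    # Model A solves everything correctly, so it disagrees with the key
    # on every flawed question and is only credited for the sound ones.
    solver = {"reported": n_sound / n_questions, "true": 1.0}

    # Model B reproduces the key verbatim, wrong entries included.
    memorizer = {"reported": 1.0, "true": n_sound / n_questions}

    return solver, memorizer


# 42 flawed questions out of 100, mirroring the 42% error rate above.
solver, memorizer = benchmark_scores(100, 42)
print("solver:", solver)
print("memorizer:", memorizer)
```

Under these assumptions the model that actually got smarter posts the lower score. Real benchmark errors are messier (ambiguous wording, multiple valid answers), so treat this as an upper bound on the distortion, not a measurement.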

The Foundation Model Transparency Index dropped from 58 to 40. Companies are disclosing less about training data, parameter counts, and safety evaluations. The most powerful models are the ones we know the least about.

That is not a measurement problem. That is an operational risk.

US and China: Razor-Thin Margins

The geopolitical race is tighter than the headlines suggest. Anthropic leads the Arena leaderboard as of March 2026. xAI, Google, and OpenAI trail closely. DeepSeek and Alibaba lag by single digits. In February 2025, DeepSeek R1 briefly matched the top US model.

The US has 5,427 data centers. 10x more than any other country. China leads in research publications, patents, and industrial robotics. Different playbooks, same objective.

The talent pipeline is a problem. AI researcher migration to the US has dropped 89% since 2017. Down 80% in the last year alone. The US is still building the best models, but the bench is getting thin.

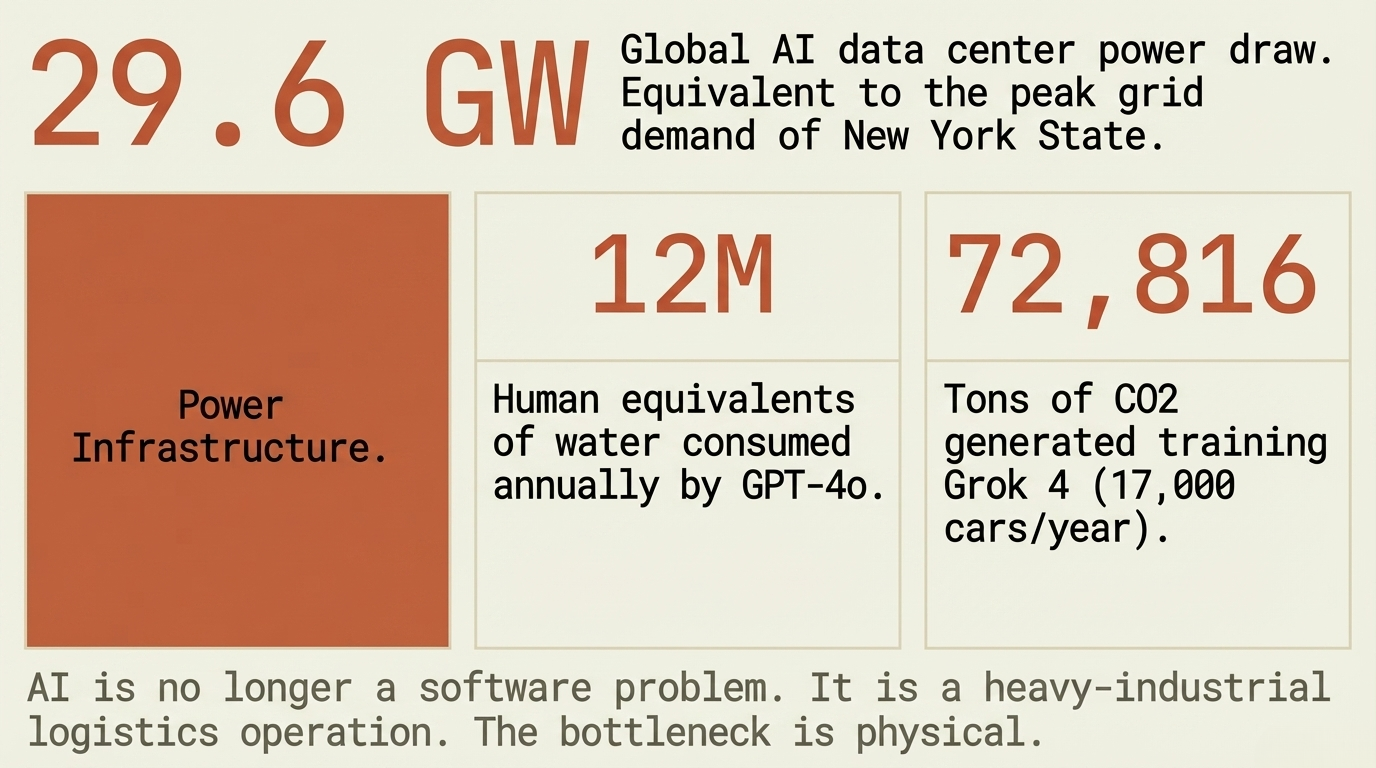

The Power Bill

29.6 gigawatts. That is what AI data centers draw globally. Enough to run the entire state of New York at peak demand.

GPT-4o’s annual water consumption exceeds the drinking water needs of 12 million people. Training Grok 4 produced 72,816 tons of CO2. Equivalent to 17,000 cars running for a year.

This is not a side effect. This is the cost of operations. Every model improvement requires more compute, more power, more cooling, more physical infrastructure.
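To put the power and carbon figures above on a common footing, here is a back-of-the-envelope sketch. It assumes the 29.6 GW is a continuous average draw over a non-leap year, which the report excerpt does not confirm. The `utilization` parameter and the function names are illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, non-leap year


def annual_energy_twh(power_gw: float, utilization: float = 1.0) -> float:
    """Energy used in a year, in terawatt-hours.

    Assumption: power_gw is an average draw; utilization scales it down
    if the figure is really installed capacity rather than actual load.
    """
    gwh = power_gw * HOURS_PER_YEAR * utilization
    return gwh / 1000.0  # 1 TWh = 1,000 GWh


def tons_per_car(total_tons: float, cars: int) -> float:
    """CO2 per car-year implied by the stated car equivalence."""
    return total_tons / cars


print(round(annual_energy_twh(29.6), 1), "TWh per year at full draw")
print(round(tons_per_car(72_816, 17_000), 2), "tons of CO2 per car-year")
```

At full draw that works out to roughly 259 TWh a year, and the car comparison implies about 4.3 tons of CO2 per car per year. If the 29.6 GW is capacity rather than load, lower `utilization` accordingly.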

Global corporate AI investment hit $581.7 billion in 2025. Up 130%. Private investment: $344.7 billion. The US alone: $285.9 billion, 23x China’s reported private spend. The World Economic Forum puts the total hardware buildout at $7 trillion.
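The investment figures above imply a few numbers the paragraph does not state outright. This sketch derives them, assuming "Up 130%" means a 130% year-over-year increase and that the 23x ratio compares US and Chinese private investment directly. The function names are illustrative and the outputs are derived values, not figures from the report.

```python
def implied_prior_year(current: float, pct_increase: float) -> float:
    """Back out last year's value from this year's value and the % increase."""
    return current / (1 + pct_increase / 100)


def share(part: float, whole: float) -> float:
    """Fraction of the whole accounted for by the part."""
    return part / whole


corporate_2025 = 581.7  # $B, global corporate AI investment
private_2025 = 344.7    # $B, global private AI investment
us_private = 285.9      # $B, US private AI investment
us_to_china = 23        # stated US-to-China multiple

# Implied 2024 corporate investment, in $B.
print(round(implied_prior_year(corporate_2025, 130), 1))
# US share of global private investment, as a percentage.
print(round(share(us_private, private_2025) * 100, 1))
# Implied China private investment, in $B.
print(round(us_private / us_to_china, 1))
```

Read this way, corporate investment was about $253 billion the year before, the US accounts for roughly 83% of global private investment, and China's reported private spend is around $12 billion.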

One company in Taiwan fabricates almost every leading AI chip. TSMC. That is a single point of failure for the entire global AI supply chain. One geopolitical incident and the whole stack is compromised.

The Job Market Is Already Moving

Employment for software developers aged 22 to 25 is down nearly 20% since 2022. Older developer hiring is still growing. Same pattern in customer support and other high-AI-exposure roles.

Productivity gains: 26% in software development, 14% in customer service. A third of organizations surveyed by McKinsey plan to cut headcount this year. Deepest cuts: service operations and software engineering.

53% of the global population adopted generative AI within three years. Faster than the PC. Faster than the internet. 88% of organizations are running it. 80% of university students use it.

Entry-level roles built on repetitive, well-documented tasks are getting compressed first. That is not a prediction. That is the current trendline.

Operational Takeaways

Early career: The 20% drop is real. It is concentrated in pure-execution roles. The antidote: understand systems, not syntax. Judgment over keystrokes. Learn to deploy and evaluate AI, not just use it.

Mid-career tech: Your domain knowledge is the moat. AI handles implementation. You handle architecture, business translation, and institutional context. That is not automatable.

Security: AI agents solving 93% of cybersecurity problems means the defensive side is automating. So is the offensive side. CrowdStrike’s 2026 threat report shows an 89% year-over-year increase in AI-enabled attacks. Average eCrime breakout time: 29 minutes. Both sides of this arms race are accelerating.

Infrastructure: 29.6 GW and climbing. Water, power, cooling, supply chain resilience. The bottleneck is physical, not digital. Datacenter architecture, power engineering, network operations, cooling systems. If you have those skills, your value just went up.

The AI Index does not tell you what to think. It tells you what is happening. Act accordingly.

Sources

- Inside the AI Index: 12 Takeaways from the 2026 Report (Stanford HAI)

- Want to understand the current state of AI? Check out these charts (MIT Technology Review)

- Stanford’s AI Index for 2026 Shows the State of AI (IEEE Spectrum)

- Here’s how to get the $7 trillion AI hardware buildout right (World Economic Forum)

- 2026 AI Index Report (Stanford HAI)